You built a pretty good query understanding solution. It’s an improvement.

You have to ship tomorrow.

One problem: the query purple mattress.

Turns out that’s not a mattress colored purple. It’s a brand named Purple. But our otherwise smart query understanding solution sees purple as a color.

You have to ship tomorrow. And this is a pretty popular query.

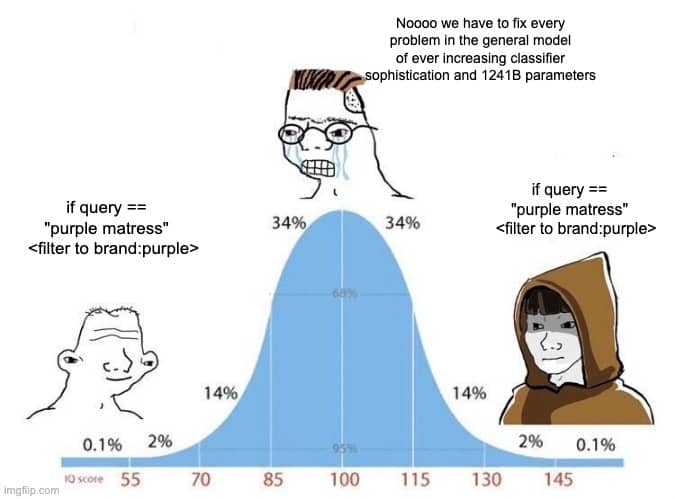

Do you:

(a) Do the right ML thing: try to train a better model to fix it?

(b) Just accept models will be imperfect and add manual exceptions?

The more I work in search, the more I accept (b). In other words:

We can always make models a bit better, but they’re not perfect. A 99% correct model still has users in the 1% frustrated case. Instead of bending the models backwards to solve one-off problems, we need an escape valve. A safety hatch. A way to take pressure off models with simple rules.
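Here's a minimal sketch of what that escape valve could look like, not a definitive implementation. Everything in it is hypothetical: `model_understand` is a toy stand-in for whatever model you actually ship, and `OVERRIDES`, `normalize`, and `understand` are names made up for illustration. The idea is just that a hand-maintained table of exceptions gets checked first, and the model only runs when no rule matches.

```python
from dataclasses import dataclass, field


@dataclass
class QueryUnderstanding:
    query: str
    attributes: dict = field(default_factory=dict)


# Toy stand-in for the real model (an assumption for this sketch).
# It tags known color words as colors, which is exactly the mistake
# we want to route around for "purple mattress".
KNOWN_COLORS = {"purple", "red", "blue"}


def model_understand(query: str) -> QueryUnderstanding:
    tokens = query.lower().split()
    attrs = {}
    for tok in tokens:
        if tok in KNOWN_COLORS:
            attrs["color"] = tok
    rest = [t for t in tokens if t not in KNOWN_COLORS]
    if rest:
        attrs["product_type"] = " ".join(rest)
    return QueryUnderstanding(query, attrs)


# Hand-maintained exceptions, keyed by normalized query text.
OVERRIDES = {
    "purple mattress": {"brand": "Purple", "product_type": "mattress"},
}


def normalize(query: str) -> str:
    # Lowercase and collapse whitespace so trivial variants hit the same rule.
    return " ".join(query.lower().split())


def understand(query: str) -> QueryUnderstanding:
    # Rules win over the model; the model handles everything else.
    rule = OVERRIDES.get(normalize(query))
    if rule is not None:
        return QueryUnderstanding(query, dict(rule))
    return model_understand(query)
```

With this in place, `understand("Purple Mattress")` comes back with a brand instead of a color, while a query like "red mattress" still goes through the model untouched. Exact-match lookup is the simplest possible rule format; it keeps the blast radius of each exception small, at the cost of needing one entry per query variant.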

-Doug

This is part of Doug’s Daily Search tips - subscribe here

Enjoy softwaredoug in training course form!

Starting May 18!

Sign up here - http://maven.com/softwaredoug/cheat-at-search

I hope you join me at Cheat at Search with Agents to learn to use agents in search, build better RAG, and use LLMs in query understanding.