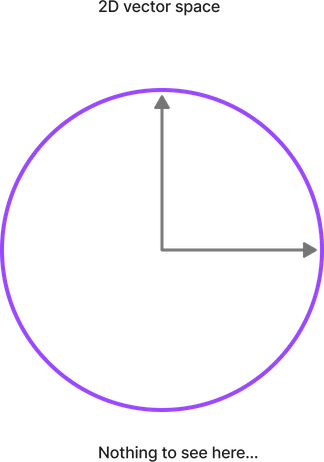

One place 2D analogies of vector search break down: orthogonality.

In 2D there’s little special about orthogonality: two random vectors are just as likely to sit at 90 degrees as at 180 degrees.

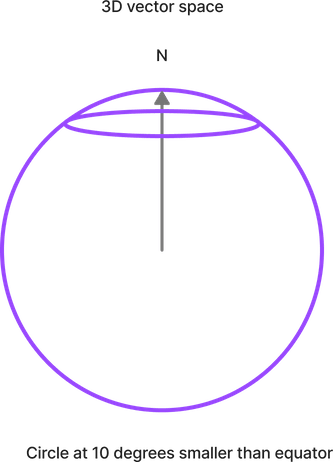

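But move up to 3D. There’s more space at 90 degrees than at 10 degrees. Picture a globe: the directions 90 degrees from the north pole trace the whole equator, while those 10 degrees away trace only a small circle near the pole.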

Now imagine 768 dimensions! Orthogonality becomes even more the norm.
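To make that concrete, here is a minimal simulation sketch. It assumes "random vectors" means directions drawn uniformly from the unit sphere (real embeddings are not uniformly random, so treat this as an illustration only). The helper names `random_unit_vector`, `angle_deg`, and `fraction_near_orthogonal`, and the 10 degree tolerance, are made up for this example.

```python
import math
import random


def random_unit_vector(dims, rng):
    """Sample a direction uniformly on the unit sphere by normalizing Gaussian draws."""
    v = [rng.gauss(0.0, 1.0) for _ in range(dims)]
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]


def angle_deg(a, b):
    """Angle between two unit vectors, in degrees."""
    cos = sum(x * y for x, y in zip(a, b))
    cos = max(-1.0, min(1.0, cos))  # guard against floating-point drift
    return math.degrees(math.acos(cos))


def fraction_near_orthogonal(dims, tolerance_deg=10.0, n_pairs=2000, seed=0):
    """Share of random pairs whose angle is within tolerance_deg of 90 degrees."""
    rng = random.Random(seed)
    hits = 0
    for _ in range(n_pairs):
        a = random_unit_vector(dims, rng)
        b = random_unit_vector(dims, rng)
        if abs(angle_deg(a, b) - 90.0) <= tolerance_deg:
            hits += 1
    return hits / n_pairs


if __name__ == "__main__":
    for dims in (2, 3, 768):
        share = fraction_near_orthogonal(dims)
        print(f"{dims:>3} dims: {share:.3f} of pairs within 10 degrees of 90")
```

Under this uniform-direction assumption, the share should land near 11% in 2D (a 20 degree window out of 180), near 17% in 3D, and at essentially 100% in 768 dimensions, give or take sampling noise.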

Why does this matter? In embeddings, orthogonality means the absence of similarity (a cosine similarity of zero). As we add dimensions, similarity between any two vectors becomes less likely.

Or, in other words, we add precision to embeddings with more dimensions. Similarity occurs rarely, but hopefully more accurately. But add too many dimensions, and it may not happen at all. A classic precision/recall tradeoff.
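A rough way to see the tradeoff: count how often two unrelated vectors clear a similarity cutoff purely by chance. The sketch below assumes random Gaussian vectors as a stand-in for unrelated embeddings and an arbitrary cosine threshold of 0.5. Both choices, and the names `random_cosine` and `chance_match_rate`, are illustrative assumptions, not anything from a real embedding model.

```python
import math
import random


def random_cosine(dims, rng):
    """Cosine similarity between two random Gaussian vectors with dims components."""
    a = [rng.gauss(0.0, 1.0) for _ in range(dims)]
    b = [rng.gauss(0.0, 1.0) for _ in range(dims)]
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def chance_match_rate(dims, threshold=0.5, n_pairs=2000, seed=0):
    """Share of random pairs whose cosine similarity is at least threshold."""
    rng = random.Random(seed)
    hits = sum(random_cosine(dims, rng) >= threshold for _ in range(n_pairs))
    return hits / n_pairs


if __name__ == "__main__":
    for dims in (2, 8, 32, 128):
        rate = chance_match_rate(dims)
        print(f"{dims:>3} dims: {rate:.4f} of random pairs score >= 0.5")
```

The chance match rate should fall steadily as dimensions grow: fewer accidental matches (precision), but also a higher bar for anything to match at all (recall).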

More here https://softwaredoug.com/blog/2022/12/26/surpries-at-hi-dimensions-orthoginality

-Doug

This is part of Doug’s Daily Search tips - subscribe here

Enjoy softwaredoug in training course form!

Starting May 18!

Sign up here: http://maven.com/softwaredoug/cheat-at-search

I hope you join me at Cheat at Search with Agents to learn to use agents in search, build better RAG, and use LLMs in query understanding.
