After retrieving BM25 (or any other) ranked search results, you have new information about the query that you might not have noticed: the search results themselves!

One early discovery in Information Retrieval:

- Compare what’s in the foreground (the initial top N results)

- … to the background corpus

- Then use that to improve the original query

How, exactly, becomes a series of design decisions. You could take any number of approaches:

- Take the embeddings of the top N results and use them to expand recall (as in Daniel Tunkelang’s Bag of Documents approach)

- Find terms that occur more frequently in the foreground than in the background, and use those in search (as in Semantic Knowledge Graph, written about in AI Powered Search by Grainger et al.)
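To make the second option concrete, here is a minimal sketch of foreground-vs-background term scoring. It is not the Semantic Knowledge Graph implementation, just a plain document-frequency ratio. The function names (`doc_freq`, `foreground_terms`), the whitespace tokenizer, and the toy corpus are all assumptions made up for illustration.

```python
from collections import Counter


def doc_freq(docs):
    """Count, for each term, how many docs contain it at least once."""
    counts = Counter()
    for doc in docs:
        counts.update(set(doc.lower().split()))
    return counts


def foreground_terms(foreground, corpus, top_k=5):
    """Rank terms by how much more often they appear in the foreground than in the corpus."""
    fg_df = doc_freq(foreground)
    bg_df = doc_freq(corpus)
    scores = {}
    for term, fg_count in fg_df.items():
        fg_rate = fg_count / len(foreground)
        # Guard against terms missing from the corpus (assumed rare: foreground is usually a subset).
        bg_rate = max(bg_df[term], 1) / len(corpus)
        scores[term] = fg_rate / bg_rate
    # Highest ratio first; ties broken alphabetically for stable output.
    return sorted(scores.items(), key=lambda kv: (-kv[1], kv[0]))[:top_k]


# Toy corpus (hypothetical); the first three docs play the role of the top N results.
corpus = [
    "oak dining table seats ten",
    "dining table with placemat set",
    "round dining table and tablecloth",
    "office desk with drawers",
    "leather office chair",
    "garden chair set",
]
foreground = corpus[:3]
scores = dict(foreground_terms(foreground, corpus, top_k=20))
print(scores)
```

On a corpus this small, any term that only appears in the foreground gets the same top score, whether it is ‘placemat’ or ‘ten’. A raw ratio alone does not separate related terms from incidental ones.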

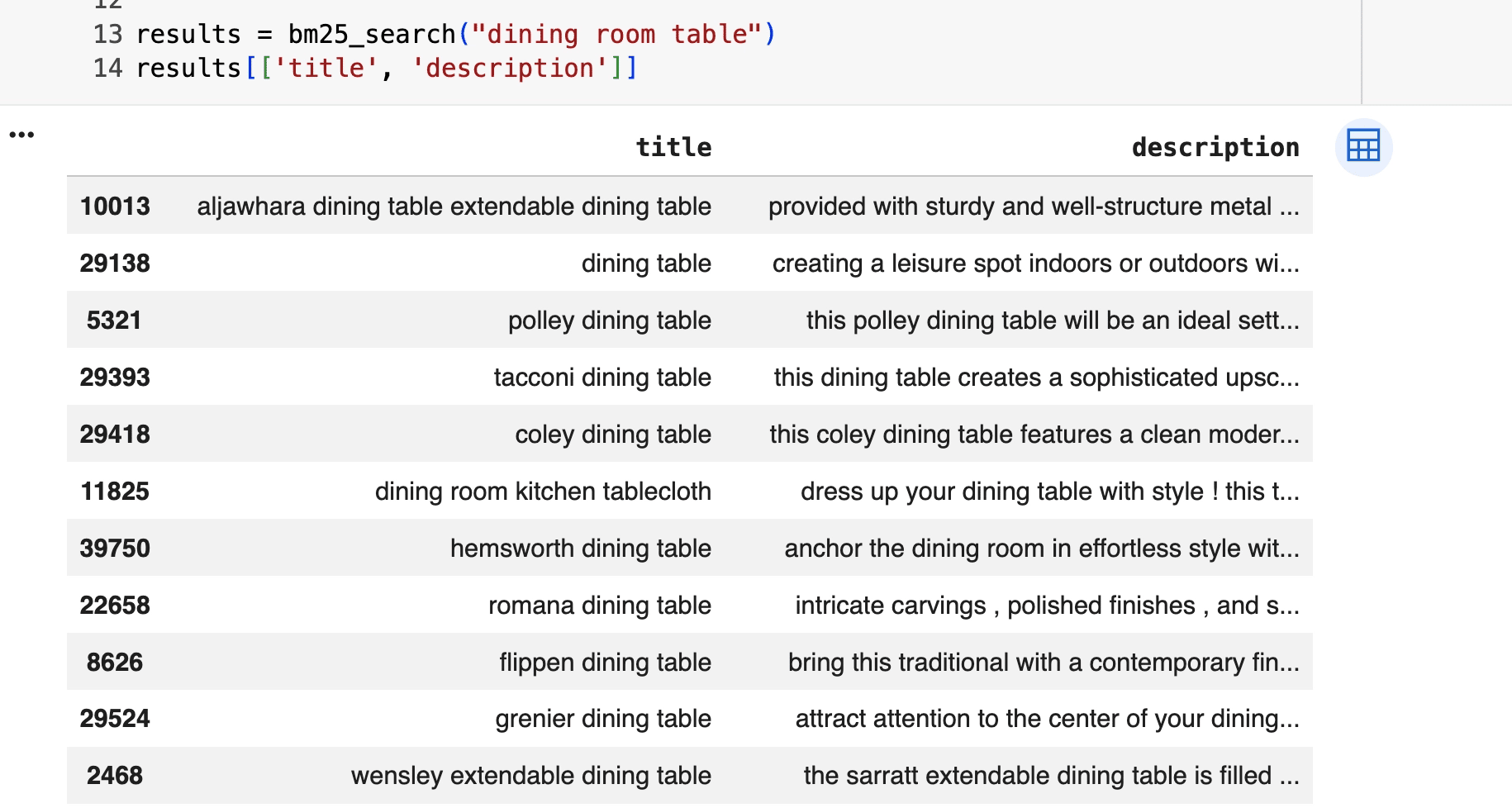

For example, in this semantic knowledge graph notebook, we retrieve some initial results for a “dining room table” query:

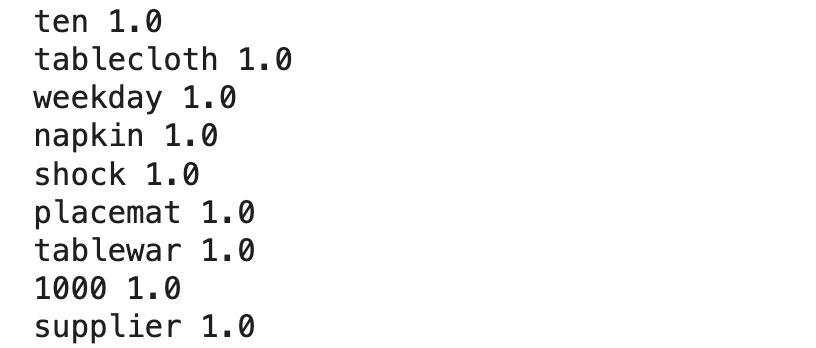

We then use those to score the most anomalous terms in the product description field:

Would these be useful query expansions? Above, we see the promise, and the challenges, of the approach:

- 👍 - the positive: related terms like placemat, tableware, tablecloth, etc

- 👎 - the negative: spurious terms like ‘ten’, which likely occurs in dining room descriptions (“seats ten”). But is this term really related to dining room tables?

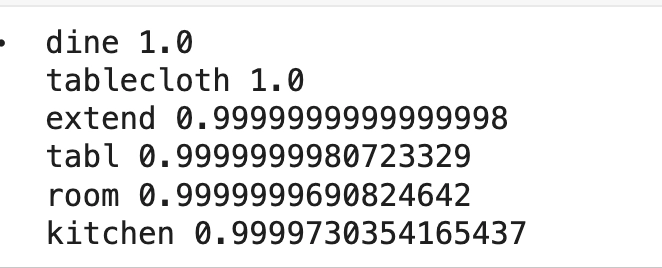

I chose the messiest field on purpose. A cleaner field like ‘title’ produces better candidate expansions:

If we’re so vulnerable to data quality, can this work?

Yes, it can work, but only after good corpus hygiene. It’s another example of how content understanding IS query understanding. There’s no free lunch. Clean, well-organized content helps every layer of search quality, not just blind relevance feedback. We can then pick and choose which fields to use based on the information they convey.

There are other decisions. For example, should you weight the foreground docs by relevance? Not all docs in the first pass are created equal, after all. Or how do you handle thresholds? Should you accept terms that occurred only a few times? Should you treat the background as a prior somehow and “move off” the background as evidence accumulates?
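One way to play with these questions is sketched below. It is only one possible answer, not the notebook’s method. It weights each foreground doc by a first-pass relevance score, drops terms seen in too few foreground docs, and treats the background rate as a pseudo-count prior that the estimate moves away from as weighted evidence accumulates. The name `smoothed_lift`, the parameters `prior_strength` and `min_docs`, and all of the numbers are assumptions for illustration.

```python
from collections import defaultdict


def smoothed_lift(foreground, bg_rate, prior_strength=2.0, min_docs=2):
    """Score terms from (text, weight) pairs against background rates.

    foreground: list of (text, weight); weight is a hypothetical first-pass relevance score.
    bg_rate: dict of term -> fraction of corpus docs containing the term.
    """
    weighted = defaultdict(float)  # summed weight of foreground docs containing the term
    doc_count = defaultdict(int)   # unweighted count of foreground docs containing the term
    total_weight = sum(weight for _, weight in foreground)
    for text, weight in foreground:
        for term in set(text.lower().split()):
            weighted[term] += weight
            doc_count[term] += 1

    scores = {}
    for term, w in weighted.items():
        if doc_count[term] < min_docs or term not in bg_rate:
            continue  # threshold: too little evidence, or no known background rate
        prior = bg_rate[term]
        # Start at the background rate, then move off it as weighted evidence accumulates.
        estimate = (w + prior_strength * prior) / (total_weight + prior_strength)
        scores[term] = estimate / prior  # values above 1.0 mean "more common in the foreground"
    return scores


# Hypothetical first-pass results with relevance weights, plus made-up background rates.
results = [
    ("oak dining table seats ten", 3.0),
    ("dining table with placemat set", 2.0),
    ("garden chair set", 0.5),
]
background = {"dining": 0.5, "table": 0.5, "set": 1 / 3, "ten": 1 / 6}
lifts = smoothed_lift(results, background)
print(lifts)
```

Here ‘ten’ is dropped by the `min_docs` threshold, and ‘set’ scores lower than ‘dining’ because part of its evidence comes from a low-weight doc. Raising `prior_strength` pulls every score back toward 1.0, which is the “stay near the background until proven otherwise” behavior.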

Take a look at my notebook. What ideas do you have to improve the relevance feedback?

-Doug

This is part of Doug’s Daily Search tips - subscribe here

Enjoy softwaredoug in training course form!

Starting May 18!

Sign up here - http://maven.com/softwaredoug/cheat-at-search I hope you join me at Cheat at Search with Agents to learn to use agents in search, build better RAG, and use LLMs in query understanding.
